
EMA | AC25 Austin, Texas

16-20 Sept

UBIQ at ISBI 2025

26 Sept

COBIS: 43rd Annual Conference 2025

10-12 May

UBIQ sponsors CASE-NAIS 2025

26-28 Jan

Get Ready for BBCON 2024!

24-26 Sept

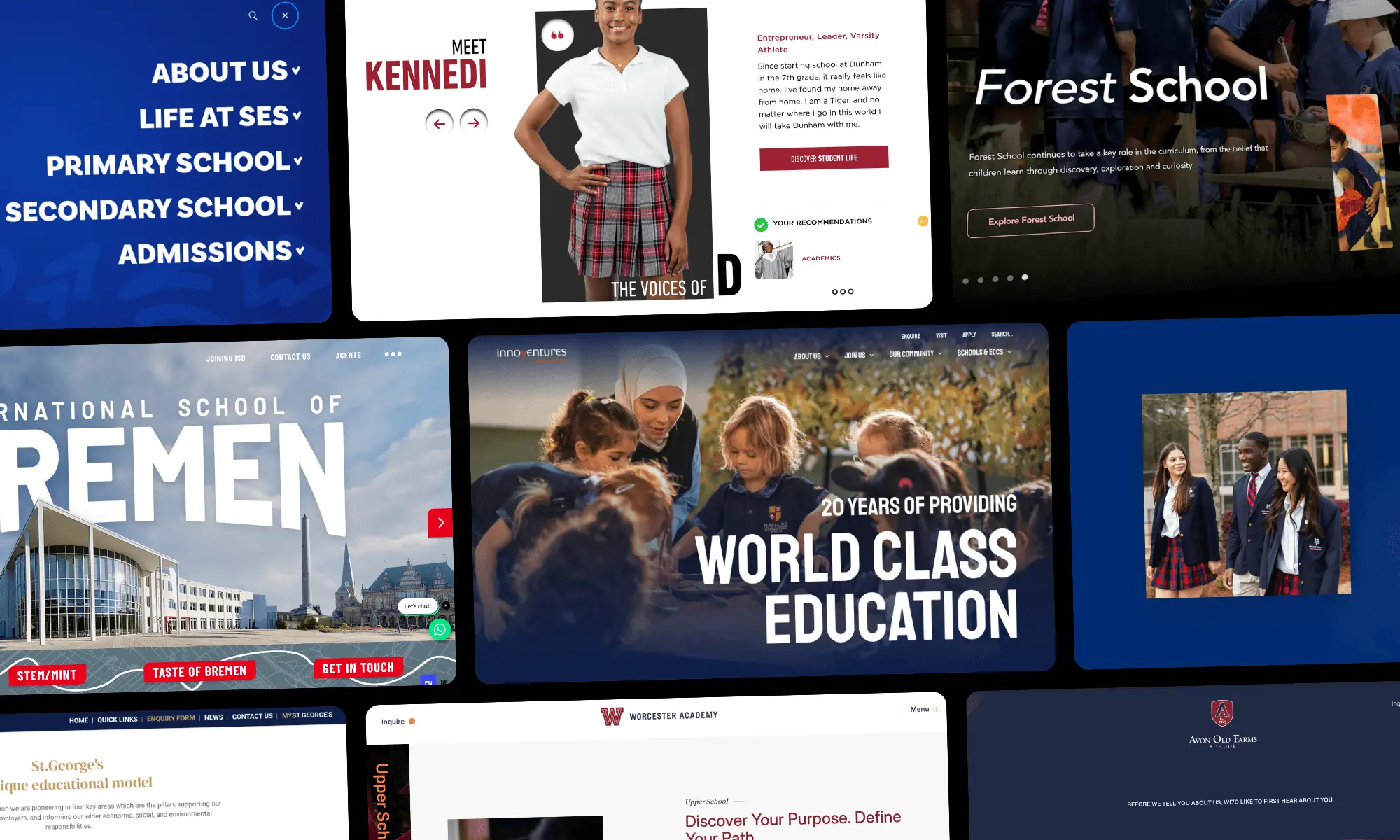

Blackbaud Makes a Strategic Investment in UBIQ

Press

Blackbaud’s $5m partnership with UBIQ will enable K-12 private schools to create digital-first experiences that modernize the admissions process.